Turning Data into Velocity: Caper's Edge and Cloud Data Flywheel with Capsight

Key Contributors: Youming Luo, Andrew Tanner, Matas Sriubiskis, Sylvia Lin, Sikun Zhu, Lei Li, Xiao Zhou

Introduction

Caper is Instacart’s AI-powered smart cart that provides customers with a fast, seamless, and intuitive shopping experience. We achieve this through computer vision and multi-sensor fusion to power accurate product recognition and effortless checkout. Delivering this experience requires Caper’s AI models to understand what truly happens in stores — the movement, intention, and decisions unfolding across every grocery aisle.

Historically, our ability to learn from production environments was limited. Even though the carts were deployed in stores, we lacked a scalable way to collect real‑world data that would allow us to rapidly iterate and improve our models. This resulted in three core challenges:

- Scalable Onboard Observability: We had little visibility into what was happening on carts in stores. When something went wrong, it was hard to understand or reproduce the scenario. At the same time, each cart generates gigabytes of multimodal data from sources such as cameras, weight sensors, and localization sensors. We needed a centralized way to capture key moments so the team could clearly understand what the cart experiences, how users interact with it, and where to improve, all while maintaining a magical user experience and minimal impact on the network.

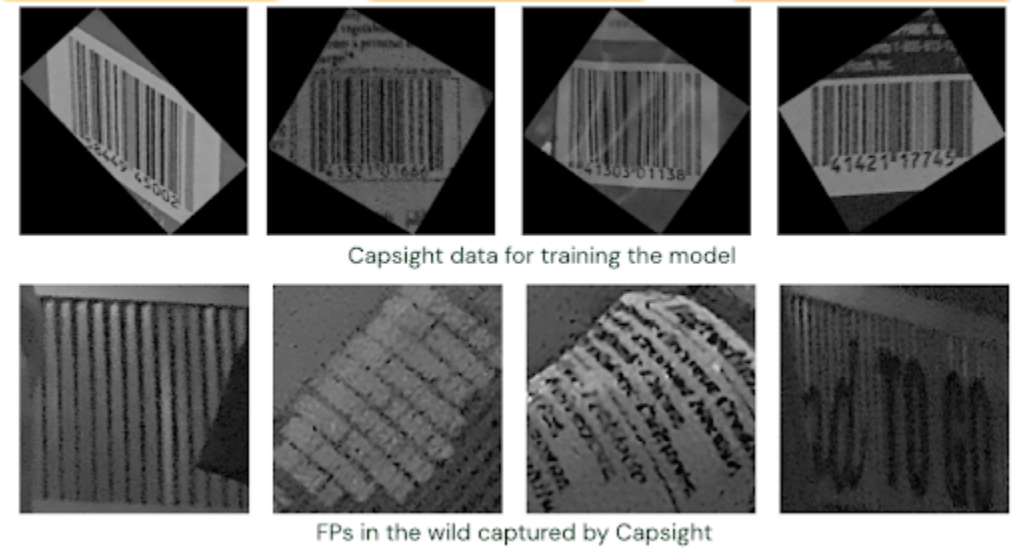

- Data Quality and Diversity: Our models were primarily trained on manually collected data that didn’t fully reflect the complexity of real-world stores, including lighting changes, occlusions, damaged packaging, motion blur, unusual angles, and store-specific products. This gap can hurt the user experience. We needed a reliable way to systematically gather high-quality, diverse production data that could increase our models’ robustness and accuracy.

- End-to-End Model Cycle Time: Turning production data into model updates required manual data cleaning, triage, labeling, and training. This made the end‑to‑end model development cycle both slow and expensive. We wanted a rapid, automated way to learn from the vast diversity of real‑world data so that the full model iteration cycle could run weekly instead of monthly, and so that the cost of iteration would not grow linearly with deployment size.

To address these challenges, we built Capsight, an end-to-end platform that transforms our fleet of Caper carts into a distributed data collection and model improvement engine, enabling our carts to get smarter on their own over time.

The Capsight Ecosystem: Core Components

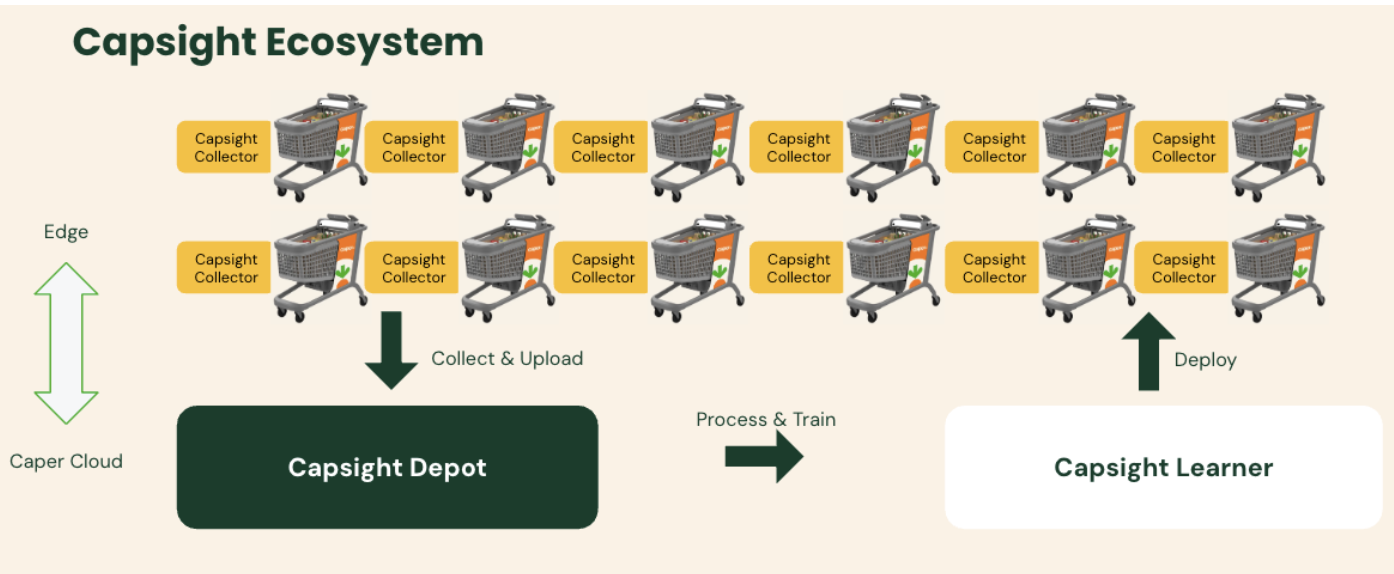

Capsight creates a continuous feedback loop connecting live edge-captured data directly to our ML training workflow. It consists of three components that work together to create our data flywheel:

Collect → Manage → Label → Train → Deploy

- Capsight Collector: An intelligent, on-device agent that captures high-value data from a variety of cart sensors (camera, weight, location, etc.).

- Capsight Depot: A centralized cloud platform for data management. It ingests, processes, and indexes all incoming data while performing data cleaning and quality processing, making the data searchable, explorable, and ready for annotation.

- Capsight Learner: A distributed training platform that consumes curated datasets from the Depot to train, evaluate, and accelerate model iteration.

Capsight Collector: Intelligent Capture on the Edge

The Capsight Collector is the foundation of the entire system: the cart’s central data-gathering agent. Its mission is to capture a holistic view of every interaction by collecting synchronized data from all of the cart’s sensors.

While the initial phase focuses on high-value computer vision data from the cameras, the Collector is designed as a multi-modal platform. We integrated other crucial data streams, such as weight data from the scale and location data within the store, to build a complete picture of each event.

To achieve this without impacting cart performance or overwhelming store networks, we solved several key engineering challenges:

- Intelligent, Trigger-Based Capture: To avoid collecting terabytes of irrelevant data, the Collector operates on a trigger-based system. It only begins capturing data when an important event is detected. The initial trigger is a combination of an activity signal (like a hand motion) and a recognized barcode, with more signals being developed. This dual signal gives us high confidence that a meaningful interaction is occurring. The sensitivity of this trigger is an important trade-off: a trigger that fires too easily collects useless data, which is expensive and adds noise, while one that is too strict misses real interactions and shrinks the training set.

- Optimized On-Device Processing: We leverage dedicated hardware for video encoding, which ensures the entire collection process runs with zero performance regression on the cart’s primary AI tasks. We also built a dedicated communication protocol so that weight and location data can be collected without any performance degradation.

- Resilient Uploading Workflow: The collected data is stored locally on the cart’s storage device. The Uploader is designed to work seamlessly within the store environment, carefully managing upload timing and bandwidth to avoid any impact on retailer operations or network performance. To prevent disk space issues, it includes a storage check to pause collection if usage exceeds a configurable threshold and an auto-cleanup mechanism to remove the oldest files if the upload fails.
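The trigger and storage safeguards described above can be sketched roughly as follows. This is a minimal illustration, not Caper's implementation: the `Signals` type, the function names, the 0.8 default threshold, and the `(path, modified_time, size_bytes)` tuple layout are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Signals:
    """Hypothetical per-event signals; the real Collector's inputs are not specified."""
    hand_motion: bool            # activity signal
    barcode: Optional[str]       # decoded barcode, or None if none was recognized


def should_capture(signals: Signals) -> bool:
    """Dual-signal trigger: require activity AND a recognized barcode."""
    return signals.hand_motion and signals.barcode is not None


def collection_allowed(used_bytes: int, capacity_bytes: int,
                       threshold: float = 0.8) -> bool:
    """Pause collection once disk usage reaches the configurable threshold."""
    return used_bytes / capacity_bytes < threshold


def plan_cleanup(files: List[Tuple[str, float, int]],
                 bytes_to_free: int) -> List[str]:
    """Pick the oldest files to delete until enough space is reclaimed.

    Each entry is (path, modified_time, size_bytes). Returns paths oldest-first.
    """
    to_delete: List[str] = []
    freed = 0
    for path, _mtime, size in sorted(files, key=lambda f: f[1]):
        if freed >= bytes_to_free:
            break
        to_delete.append(path)
        freed += size
    return to_delete
```

Keeping the cleanup step as a pure planning function (it returns paths rather than deleting them) makes the policy easy to test separately from the filesystem. Requiring both signals in `should_capture` is the simplest form of the trade-off discussed above: adding or relaxing signals changes how often the trigger fires.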

Capsight Depot: Ingestion, Curation, and AI-Assisted Annotation

Once the Collector uploads the raw sensor packages, the Capsight Depot begins the process of transforming these massive volumes of raw files into structured, high-quality training datasets:

- Processing: A distributed data processing system ingests the raw files, extracts metadata, and performs quality checks to ensure the data is consistent and ready for downstream use.

- Indexing, Search and Visualization: All data and metadata are securely stored, indexed, and enriched to support search and deeper semantic exploration through a user‑friendly web interface. This allows the team to quickly investigate production scenarios by filtering relevant metadata and immediately accessing corresponding videos and logs.

- AI-Accelerated Annotation and Curation: A key function of the Depot is preparing data for annotation. However, as Capsight scales to millions of images daily, manual labeling becomes a significant bottleneck in both cost and time. To break this bottleneck, we’ve integrated a Vision Language Model (VLM)-based pre-labeling service directly into the Depot and Labeling Platform. Instead of sending raw images for manual annotation, the pipeline first filters out empty background images. Then, a VLM, in combination with our teacher models, automatically generates high-quality pre-labels for items and barcodes. These pre-labeled images are then sent to human annotators for rapid correction rather than slow, from-scratch creation. This AI-assisted approach is projected to reduce annotation costs by over 70% and cut a multi-day labeling task down to just a few hours. It even allows us to efficiently clean errors from our historical ground truth data.
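As a rough sketch of the pre-labeling flow just described (filter empty backgrounds, generate pre-labels, then hand off for human correction), the steps could be wired together as below. The `PreLabel` and `AnnotationTask` types and the two callables are hypothetical stand-ins; in particular, `prelabel` represents the VLM plus teacher-model combination, whose actual interface is not described here.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List


@dataclass
class PreLabel:
    kind: str          # "item" or "barcode"
    value: str         # predicted product name or barcode digits
    confidence: float


@dataclass
class AnnotationTask:
    """Unit of work sent to a human annotator for correction."""
    image_id: str
    prelabels: List[PreLabel] = field(default_factory=list)


def build_annotation_tasks(
    image_ids: Iterable[str],
    is_empty_background: Callable[[str], bool],   # assumed filter model
    prelabel: Callable[[str], List[PreLabel]],    # assumed VLM + teacher models
) -> List[AnnotationTask]:
    """Drop empty backgrounds, then attach pre-labels to every remaining image."""
    tasks: List[AnnotationTask] = []
    for image_id in image_ids:
        if is_empty_background(image_id):
            continue  # nothing to annotate, so no human time is spent on it
        tasks.append(AnnotationTask(image_id, prelabel(image_id)))
    return tasks
```

Passing the filter and pre-labeler in as callables keeps the sketch independent of any particular model. Annotators receive only the resulting tasks and correct the attached pre-labels rather than labeling from scratch.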

Capsight Learner: Closing the Training Loop

With a curated, labeled dataset ready in the Depot, the Capsight Learner completes the flywheel. This distributed, Ray-based training platform automates the process of consuming these datasets to train new model versions. An automated evaluation pipeline benchmarks models against standardized test sets, ensuring only validated improvements make it to production. By providing a direct path from labeled data to a trained model, the Learner dramatically accelerates our model training cycle. As a result, we reduced the model training stage from one week to two days.
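To make the idea of an evaluation gate concrete, here is a minimal sketch of one possible promotion rule. The rule itself (no regression on any test set, plus a positive mean gain) and the names `should_promote` and `min_mean_gain` are assumptions for illustration; the criteria the Learner actually applies are not specified above.

```python
from typing import Dict


def should_promote(baseline: Dict[str, float], candidate: Dict[str, float],
                   min_mean_gain: float = 0.0) -> bool:
    """Decide whether a candidate model may replace the baseline.

    Both arguments map test-set name -> accuracy on that standardized test set.
    """
    if set(baseline) != set(candidate):
        raise ValueError("models must be evaluated on the same test sets")
    if not baseline:
        raise ValueError("at least one test set is required")
    gains = [candidate[name] - baseline[name] for name in baseline]
    no_regression = all(gain >= 0 for gain in gains)
    mean_gain = sum(gains) / len(gains)
    return no_regression and mean_gain > min_mean_gain
```

For example, a candidate that improves on a lighting test set but drops on an occlusion test set would be rejected under this rule, even if its average accuracy is higher.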

Impact: The Flywheel in Motion

By closing the data loop, Capsight has transformed our AI development process from a reactive, manual effort into a proactive, automated one, with a measurable and immediate impact for retailers and their customers.

We’re already seeing significant accuracy gains. Within just weeks of deployment, we collected enough diverse, real-world data to train improved models that showed more than 5% improvement in accuracy, with continued gains as the deployment scales. Our dataset is now richer and systematically captures edge cases, lighting variations, and store-specific products that make our AI models more robust.

The iteration cycle is also dramatically faster. The full loop of collecting data, labeling, training, and releasing a model used to take about a month, and now completes in a week. Customers can benefit from these improvements much sooner.

Future Work: What Capsight Unlocks

Our journey with Capsight is just beginning. Next, we’re expanding beyond vision to full sensor fusion, combining camera, weight, motion, and location data. This richer, multi-modal dataset will fuel a foundation model capable of understanding real-world store environments across vision, motion, weight, and behavior.

This foundation model would enable capabilities such as:

- Detecting complex multi-item interactions and intent

- Improving location-based experiences

- Automatically surfacing the most valuable data for model improvements

As the system scales, we’re also optimizing costs: from multi-attribute extraction in a single pass to efficient VLM inference, ensuring that Capsight grows smarter and more scalable with every iteration.

Conclusion

Capsight represents a step change in how we build and ship AI with Caper at Instacart. By wiring observability and data feedback directly into our ML loop, we’ve created a closed-loop system that accelerates learning, boosts model accuracy, and strengthens reliability across our in-store retailer technology.

With higher accuracy, faster debugging, and dramatically shorter iteration cycles, Capsight allows retailers and their customers to feel improvements as soon as they’re made. It transforms real‑world data into a continuous innovation engine — one that strengthens Caper today and lays the foundation for the next generation of in‑store AI.

Instacart

Author

Instacart is the leading grocery technology company in North America, partnering with more than 1,800 national, regional, and local retail banners to deliver from more than 100,000 stores across more than 15,000 cities in North America.